Week 11: Assumptions of Linear Regression

POL272 Quantitative Methods for Social Science Research

Where are we?

- We have covered many of the basic uses of linear regression

- Linear regression with one X variable

- Linear regression with multiple X variables

- OLS is the most popular estimator of the linear model in applied work

- OLS is based on a series of assumptions

  - When these assumptions are not met, our estimates and inferences can be misleading

Assumptions of linear regression

Recall the linear model:

\[ Y_i = \beta_0 + \beta_1X_i + u_i \]

For the OLS estimators of the parameters \(\beta_0\) and \(\beta_1\) to be appropriate, four key assumptions have to be satisfied:

1. Conditional mean independence: \(E(u_i|X_i) = 0\)

2. \((X_i, Y_i), i=1, \dots, n\) are i.i.d.

3. Large outliers are unlikely

4. There is no perfect multicollinearity

Assumption 1: Conditional Mean Independence

Assumption 1

The conditional mean independence assumption means that the conditional distribution of \(u_i\) given \(X_i\) has mean zero.

It asserts that all the other factors contained in \(u_i\) are unrelated to \(X_i\).

It is written as \(E(u_i|X_i) = 0\), which implies \(corr(X_i, u_i) = 0\) (the reverse does not hold: zero correlation alone does not guarantee a zero conditional mean)

i.e. for any given value of X, the expected value of the error term is zero

This is essentially a restatement of the absence of omitted variable bias \(\rightarrow\) we appealed to this type of motivation when we first introduced multiple regression

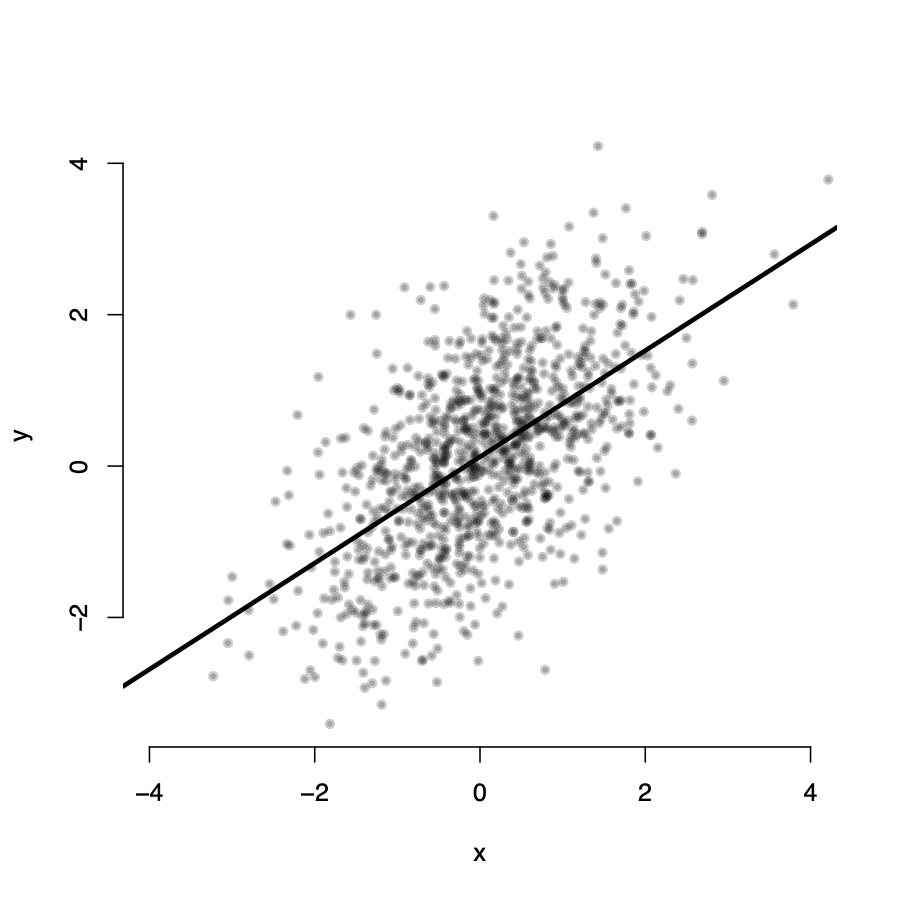

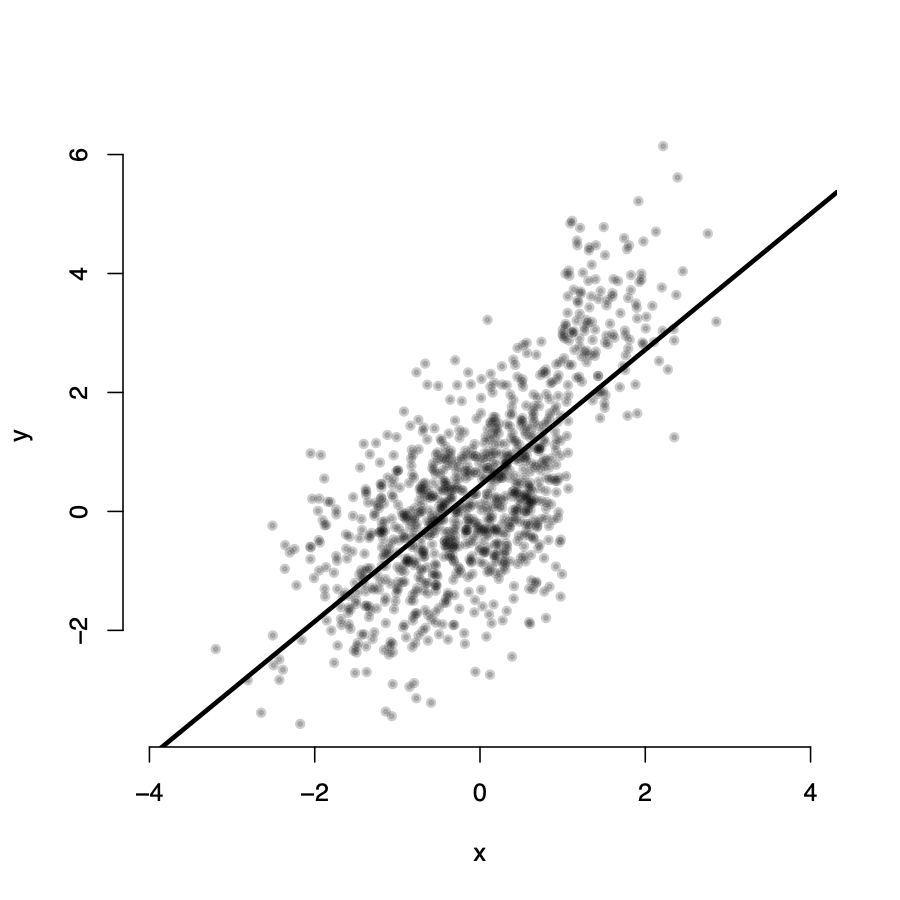

Assumption 1: \(E(u_i|X_i) = 0\)

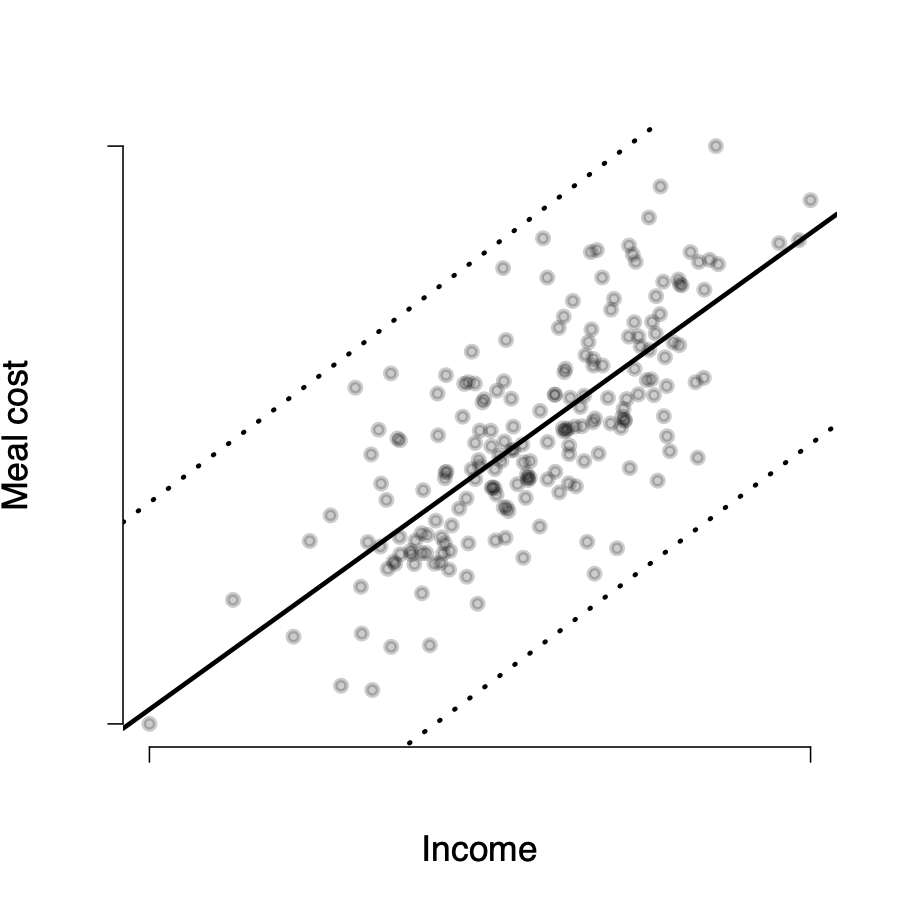

Assumption 1: \(E(u_i|X_i) = 0\)

\(Y_i\) appears randomly scattered around the line for all values of X

\(\rightarrow\) for any value of \(X_i\), the expected value of \(u_i\) is 0

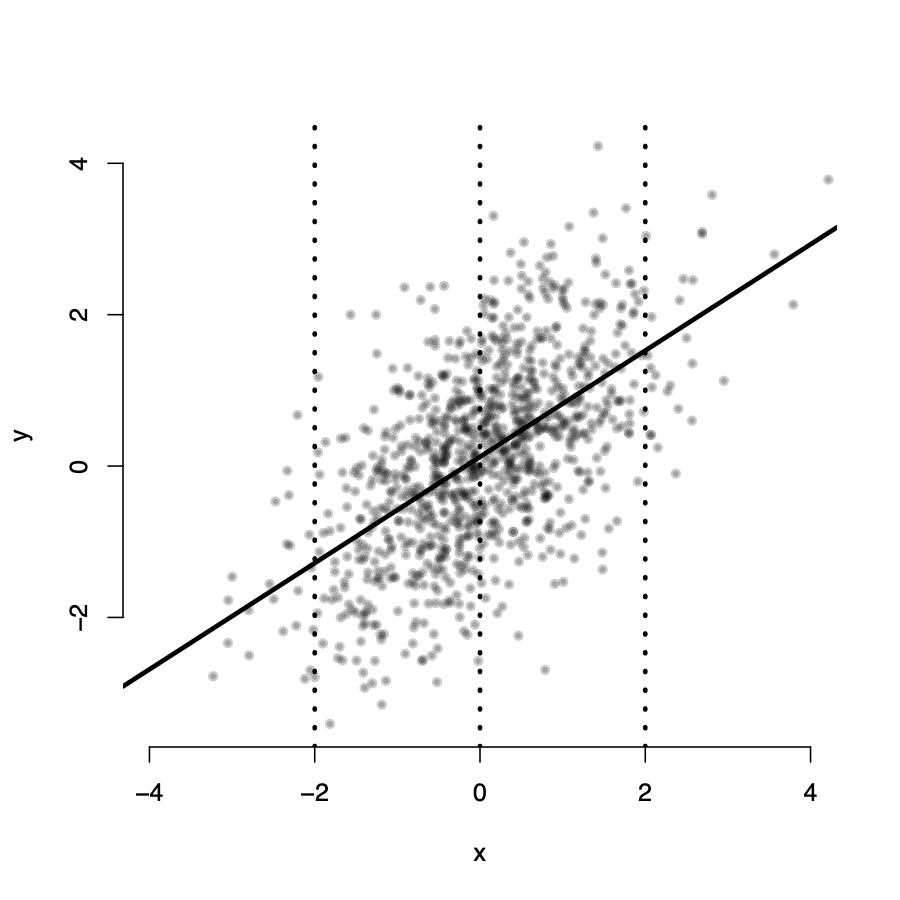

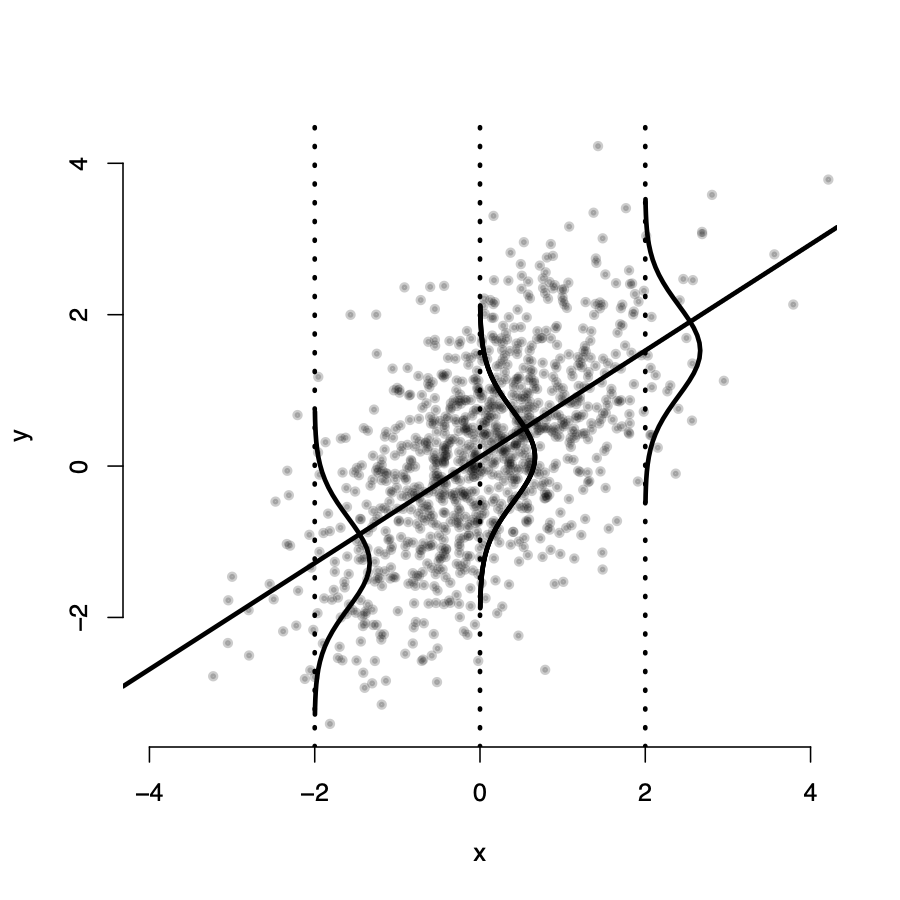

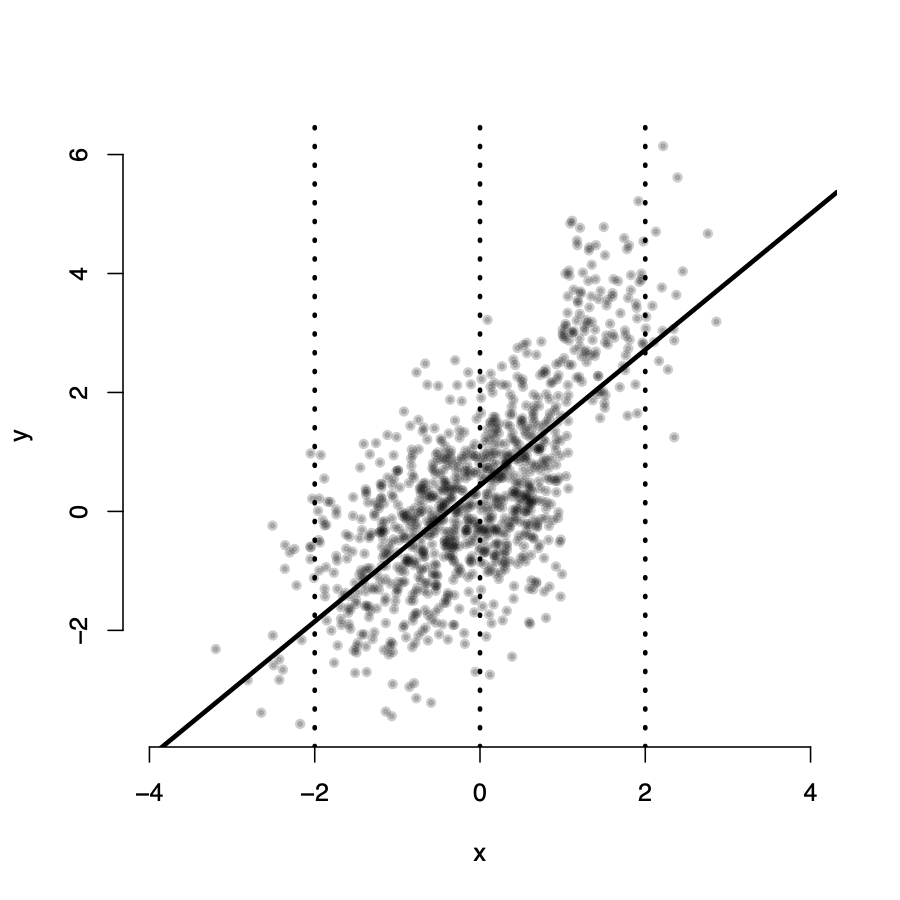

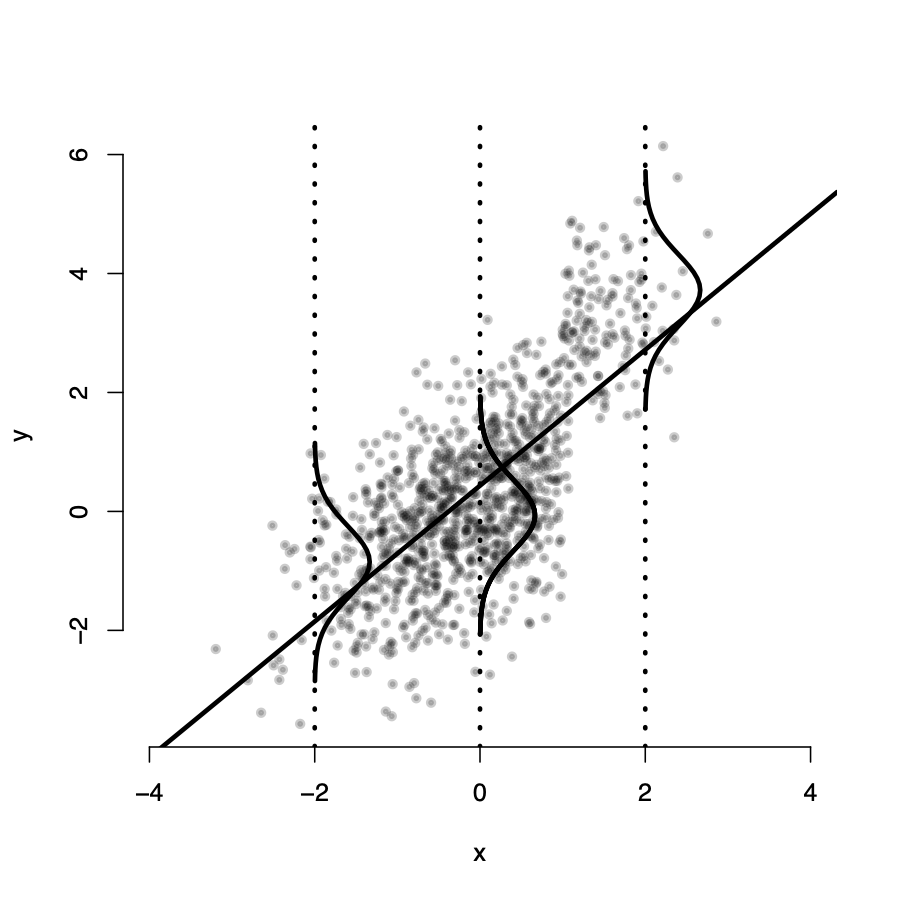

Assumption 1: \(E(u_i|X_i) \neq 0\)

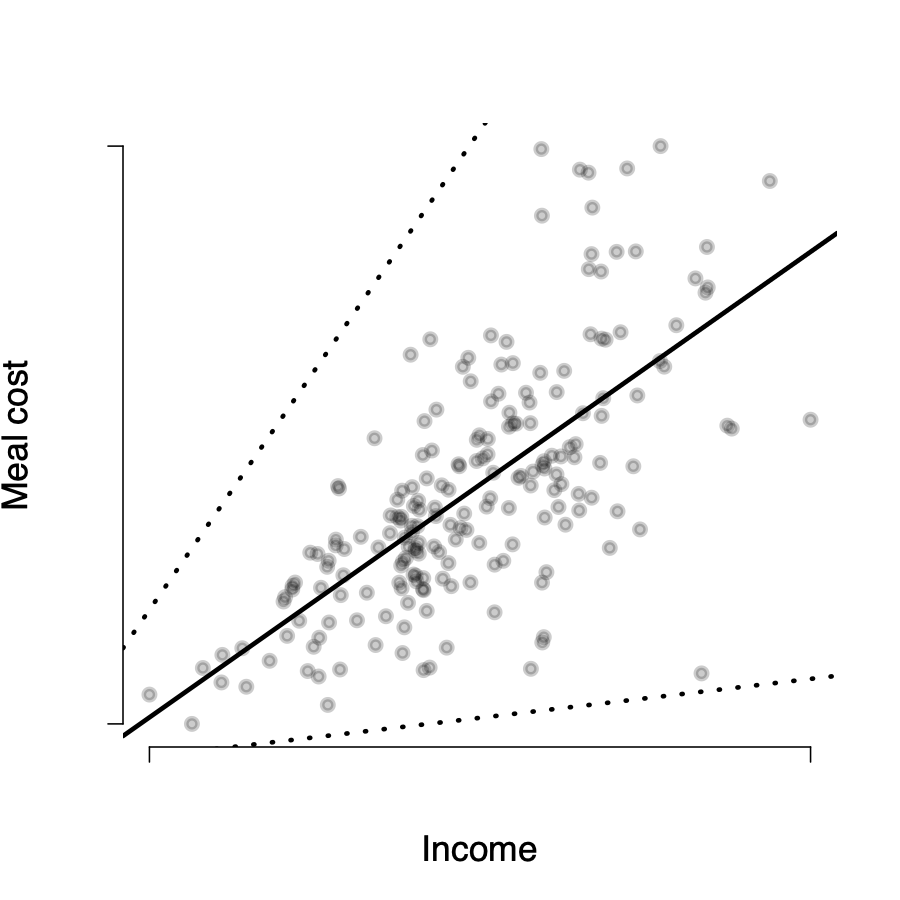

Assumption 1: \(E(u_i|X_i) \neq 0\)

\(Y_i\) is not randomly scattered around the line for all values of X

\(\rightarrow\) for some values of \(X_i\), the expected value of \(u_i\) is not zero

\(\rightarrow\) this suggests we have omitted an important variable from our model

Randomized experiments and \(E(u |X) = 0\)

In a randomized controlled experiment, subjects are randomly assigned to the treatment group (\(X=1\)) or to the control group (\(X=0\)). If random assignment is correctly implemented, it is done independently of all personal characteristics of the subject.

Random assignment makes \(X\) independent of all possible confounders (Z)

- For OVB to be a problem, Z must be correlated with both X and Y

- Randomization breaks the relationship between X and Z

- X is therefore independent of u, which implies \(E(u |X) = 0\)
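A minimal simulation can make this concrete. It assumes a made-up data-generating process in which a hypothetical confounder `z` raises both the chance of taking the treatment and the outcome, with a true treatment effect of 2; none of these numbers come from real data.

```r
set.seed(272)
n <- 10000
z <- rnorm(n)                          # hypothetical confounder (e.g. motivation)

# Self-selected treatment: units with high z are more likely to have X = 1
x_self <- as.integer(z + rnorm(n) > 0)
# Randomized treatment: a fair coin flip, independent of z by construction
x_rand <- rbinom(n, size = 1, prob = 0.5)

# True treatment effect is 2 in both cases; z is left in the error term
y_self <- 2 * x_self + 3 * z + rnorm(n)
y_rand <- 2 * x_rand + 3 * z + rnorm(n)

# Regress Y on X alone, omitting z both times
b_self <- coef(lm(y_self ~ x_self))[["x_self"]]
b_rand <- coef(lm(y_rand ~ x_rand))[["x_rand"]]

round(c(self_selected = b_self, randomized = b_rand), 2)
```

Only the randomized estimate should land near 2; the self-selected estimate also picks up part of the effect of `z`.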

Observational data and \(E(u |X) = 0\)

In observational data, \(X\) is not randomly assigned. We try to assess whether \(X\) is as good as randomly assigned conditional on other variables

- e.g. Conditional on education, is income as good as randomly assigned?

- e.g. Conditional on East-West, is the proportion of immigrants as good as randomly assigned?

How convincing is a causal claim based on observational data?

We have to make this judgement separately for each empirical application that uses observational data

Assumption 2: X and Y are i.i.d.

Assumption 2: \((X_i, Y_i), i = 1, \dots, n\) are i.i.d.

- \((X_i, Y_i), \ i=1, \dots, n\) are independent and identically distributed (i.i.d.) across observations

  - Independent: \(Y_1\) gives no information about the value of \(Y_2\)

  - Identically distributed: the distribution of \(Y_i\) is the same for all \(i\)

- If we draw observations using simple random sampling from a single large population, then \((X_i, Y_i), \ i=1, \dots, n\), are i.i.d.

Assumption 2: \((X_i, Y_i), i = 1, \dots, n\) are i.i.d.

A good example of i.i.d. data is survey data drawn from a random subset of the population.

Often this is not the case with observational data:

- Time-series data: observations of the same unit over time

  - Are observations of the UK in 1980 and 1981 ‘independent’?

- Clustered data: observations grouped within higher-level units

  - Are observations of different students from the same school ‘independent’?

We will not explore time-series, clustered or spatial dependence in this module, but these are advanced topics that you will encounter regularly when working with data.

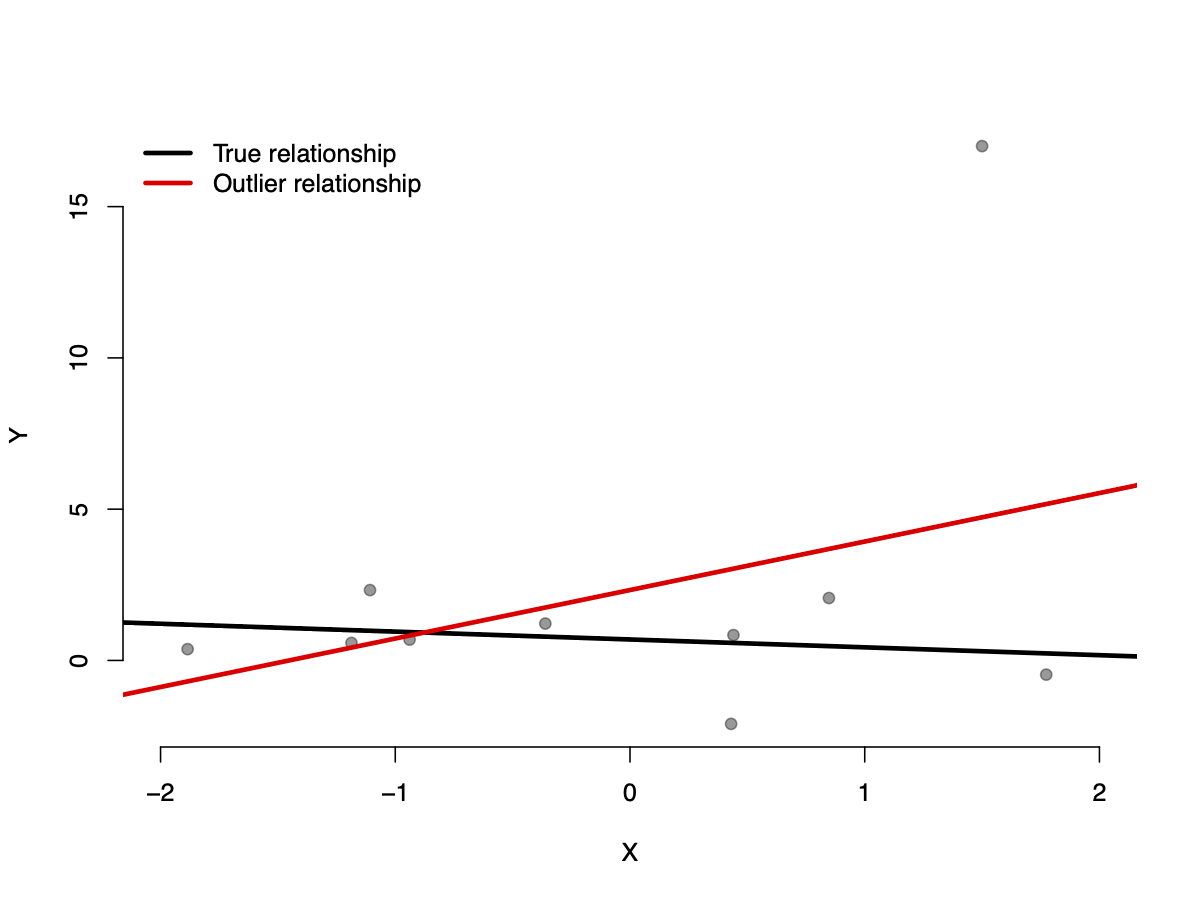

Assumption 3: Large outliers are unlikely

- Observations with values of \(X_i, Y_i\), or both that are far outside the usual range of the data are unlikely

- Large outliers can make OLS regression results misleading

- One source of outliers is data entry errors (e.g. typos in the data entry stage)

- If this assumption holds, statistical inference using OLS will not be dominated by a few observations
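To see how much a single typo can matter, the sketch below uses hypothetical data with a true slope of 2, adds an extra zero to one outcome value (a typical data-entry error), and refits the line. The data and the choice of the last observation are assumptions for illustration.

```r
set.seed(272)
x <- 1:50
y <- 2 * x + rnorm(50)          # hypothetical data, true slope 2

# Data-entry error: an extra zero is typed for the last observation
y_typo <- y
y_typo[50] <- y[50] * 10

slope_clean <- coef(lm(y ~ x))[["x"]]
slope_typo  <- coef(lm(y_typo ~ x))[["x"]]

round(c(clean = slope_clean, with_typo = slope_typo), 2)
```

One mistyped value out of 50 roughly doubles the estimated slope here, because it sits at the edge of the X range where it has the most leverage.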

Assumption 3: Large outliers are unlikely

Assumption 4: No perfect multicollinearity

Perfect multicollinearity \(\rightarrow\) when one X variable is an exact linear function of the other X variables

Imagine you would like to know the association between height (\(X\)) and shoe size (\(Y\))

- \(X_1\) is height measured in inches

- \(X_2\) is height measured in cm

You cannot estimate the model \(Y = \beta_0 + \beta_1X_1 + \beta_2X_2\)

\(X_1\) and \(X_2\) are perfectly multicollinear: \(X_2 = 2.54 \times X_1\)

\(\rightarrow\) it is impossible to compute the OLS estimator; we have to drop one of these variables from the model
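In R, `lm()` does not stop with an error when regressors are perfectly collinear: it drops one of them itself and reports its coefficient as `NA`. A sketch with simulated heights (all numbers invented):

```r
set.seed(272)
n <- 100
height_in <- rnorm(n, mean = 68, sd = 4)        # hypothetical heights in inches
height_cm <- height_in * 2.54                    # the same heights in cm
shoe_size <- 0.3 * height_in - 10 + rnorm(n)     # made-up shoe-size relationship

fit <- lm(shoe_size ~ height_in + height_cm)
coef(fit)   # height_cm is reported as NA: lm() dropped it as perfectly collinear
```

`summary(fit)` flags the same thing with a note that one coefficient is "not defined because of singularities".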

Assumption 4: No perfect multicollinearity

Intuition: in multiple regression, the coefficient on one of the regressors is the effect of a change in that regressor, holding the other regressors constant.

When shoe size is regressed on \(\text{height(in)}\) and \(\text{height(cm)}\):

- \(\beta_{\text{height(in)}}\) is the effect on shoe size of a change in height(in), holding constant height(cm).

- \(\beta_{\text{height(cm)}}\) is the effect on shoe size of a change in height(cm), holding constant height(in).

This makes no sense (height in cm cannot change while height in inches is held constant), so these coefficients cannot be estimated using OLS

A general solution is to modify the list of X variables

Imperfect multicollinearity

Imperfect multicollinearity \(\rightarrow\) a “high” degree of correlation between two or more X variables, short of an exact linear relationship

Imagine you want to know the association between education and vote choice, and between intelligence and vote choice

- \(X_1\) is education

- \(X_2\) is intelligence

- \(X_1\) and \(X_2\) will be very highly correlated (probably)

Does not prevent estimation of \(\beta\) with OLS, but:

- Difficult to estimate the separate influence of each X precisely

- Standard errors will be large and t-statistics small

There is no general solution; options include:

- Collect more data

- Drop some variables

- Transform some variables
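A minimal simulation of why standard errors grow: it assumes a made-up model \(Y = 1 + X_1 + X_2 + u\) and compares uncorrelated regressors with regressors correlated at about 0.98. The helper `se_x1()` is hypothetical, written only for this illustration.

```r
set.seed(272)
n <- 200

# Simulate y = 1 + x1 + x2 + u with cor(x1, x2) close to rho,
# then return the standard error of the coefficient on x1
se_x1 <- function(rho) {
  x1 <- rnorm(n)
  x2 <- rho * x1 + sqrt(1 - rho^2) * rnorm(n)
  y  <- 1 + x1 + x2 + rnorm(n)
  summary(lm(y ~ x1 + x2))$coefficients["x1", "Std. Error"]
}

se_low  <- se_x1(rho = 0)
se_high <- se_x1(rho = 0.98)

# Theory: Var(beta1_hat) is inflated by 1 / (1 - rho^2), so the SE by its square root
c(se_ratio = se_high / se_low, predicted = sqrt(1 / (1 - 0.98^2)))
```

The factor \(1/(1-\rho^2)\) is often called the variance inflation factor; with \(\rho = 0.98\) it implies standard errors roughly five times larger, even though OLS still runs without complaint.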

Using the Least Squares Assumptions

When these assumptions hold, in large samples the OLS estimators have approximately normal sampling distributions

This allows us to develop methods for hypothesis testing and confidence intervals

Violations of the assumptions matter \(\rightarrow\) they call into question statistical inference using linear regression:

- May suggest problems with \(\hat{\beta}\)

- May suggest problems with \(SE(\hat{\beta})\)

Assessing violations of regression assumptions

What makes a study that uses multiple regression reliable or unreliable?

We can assess the validity of an empirical study by focussing on two classes of problems:

- Threats to internal validity

- Threats to external validity

This applies to published work using linear regression, the final coursework for this module, your dissertations, etc.

Internal and external validity

Internal validity

The statistical inferences about causal effects are valid for the population being studied

External validity

The statistical inferences can be generalized from the population and setting studied to other populations and settings

Threats to Internal Validity

Internal validity consists of two components:

1. Estimators of parameters should be unbiased & consistent

   - Unbiased: in expectation, \(\hat{\beta}\) equals the ‘true’ value

   - Consistent: as n increases, \(\hat{\beta}\) converges to the ‘true’ value

2. Hypothesis tests & confidence intervals should have the desired significance level

   - If \(\alpha = 0.05\), then across many tests where the null hypothesis is true, we should reject the null about 5% of the time (on average 5 times in 100 tests)
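The second component can be checked by simulation. This sketch assumes normal, homoskedastic errors and a true slope of zero, so every rejection is a false positive; with all assumptions satisfied, the share of rejections should be close to \(\alpha\).

```r
set.seed(272)
n_sims <- 2000
alpha  <- 0.05

# Each simulation: H0: beta_1 = 0 is TRUE because y does not depend on x
reject <- replicate(n_sims, {
  x <- rnorm(50)
  y <- 1 + rnorm(50)
  p <- summary(lm(y ~ x))$coefficients["x", "Pr(>|t|)"]
  p < alpha
})

mean(reject)   # share of tests rejecting a true null; should be close to 0.05
```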

These threats lead to failures of the least squares assumptions.

Internal validity: \(\hat{\beta}\)

Reasons for \(\hat{\beta}\) to be biased

\(\hat{\beta}\) may be biased (even in large samples) due to:

- Omitted Variable Bias

- Mis-specification of the functional form

- Measurement error (in explanatory and outcome variables)

- Sample selection

All four reasons lead to a correlation between the error term and the regressor, i.e. a violation of Assumption 1.

Omitted Variable Bias

Review: OVB is present when we leave out of our model a variable that determines Y and is correlated with one or more of the X variables

- The bias persists even in large samples, so the OLS estimator is inconsistent.

- Recall that an estimator is consistent when it converges to the parameter that it is estimating as sample size increases.
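A sketch of that inconsistency, under an assumed data-generating process \(Y = 1 + 2X + 3Z + u\) with \(X = 0.5Z + e\), where the short regression omits Z. The helper `ovb_slope()` is hypothetical. The standard OVB formula predicts the estimate converges to \(2 + 3 \times 0.5 / 1.25 = 3.2\), not to the true 2.

```r
set.seed(272)

# Hypothetical DGP: z affects y AND is correlated with x
ovb_slope <- function(n) {
  z <- rnorm(n)
  x <- 0.5 * z + rnorm(n)
  y <- 1 + 2 * x + 3 * z + rnorm(n)
  coef(lm(y ~ x))[["x"]]               # short regression: z omitted
}

sizes <- c(100, 1000, 10000, 100000)
estimates <- sapply(sizes, ovb_slope)
names(estimates) <- sizes
round(estimates, 3)

# OVB formula: plim = beta_1 + beta_2 * cov(x, z) / var(x) = 2 + 3 * 0.5 / 1.25
2 + 3 * 0.5 / 1.25
```

Increasing n makes the estimate more precise, but precise around the wrong value.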

Dealing with omitted variable bias

- When variables measuring the omitted factors are available, we can include them in our model, thus controlling for them.

- We should be careful to include only relevant variables, those that belong in our model theoretically.

- Including a variable that does not belong can reduce the precision of the estimators of the other regression coefficients.

Should I include more variables in my regression?

- Be specific about the coefficient or coefficients of interest.

- Use theoretical reasoning to identify the most important potential sources of omitted variable bias. You should arrive at a base specification and some additional control variables.

- Test whether additional control variables have nonzero coefficients.

- Provide tables showing the effect of including controls on the \(\beta\) of interest. How do your results change if you include questionable control variables?

OVB when lacking adequate controls

If controls are unavailable, we cannot add them to the model. Alternatives:

- Randomized experiment: assignment of X is controlled by the researcher and will be unrelated to other determinants of Y by construction

- Instrumental variables: variables that are correlated with X but uncorrelated with the error term \(u\) (they affect Y only through X)
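A minimal sketch of the simplest instrumental-variables estimator, for one regressor and one instrument, \(\hat{\beta}_{IV} = cov(Z, Y) / cov(Z, X)\). Everything here is assumed for illustration: `z_iv` is a hypothetical instrument that shifts X but is independent of the unobserved confounder, and the true effect of X is 2.

```r
set.seed(272)
n <- 50000
confounder <- rnorm(n)                 # unobserved: ends up in the error term
z_iv <- rnorm(n)                       # hypothetical instrument, independent of the confounder
x <- 0.8 * z_iv + confounder + rnorm(n)
y <- 1 + 2 * x + 3 * confounder + rnorm(n)   # true effect of x is 2

b_ols <- coef(lm(y ~ x))[["x"]]
# Simple IV estimator for one regressor and one instrument: cov(z, y) / cov(z, x)
b_iv  <- cov(z_iv, y) / cov(z_iv, x)

round(c(ols = b_ols, iv = b_iv), 3)
```

OLS absorbs the confounder and overstates the effect; the IV estimate uses only the variation in X driven by `z_iv`. In applied work this ratio is usually computed via two-stage least squares, which also delivers the correct standard errors.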

Misspecification of the functional form

If the true population regression function is nonlinear but the estimated regression is linear, then this functional form misspecification makes the OLS estimator biased.

We can deal with this by modelling nonlinear relationships:

- Interaction terms: \(Y = \alpha + \beta_1X_1 + \beta_2X_2 + \beta_3(X_1* X_2)\)

- Polynomial terms: \(Y = \alpha + \beta_1X_1 + \beta_2X_1^2\)

- Non-linear transformations: \(Y = \alpha + \beta_1\log(X_1)\)
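In R, each of these three specifications is written directly in the `lm()` formula. The data below are simulated and the coefficients invented; only the formula syntax is the point.

```r
set.seed(272)
n  <- 500
x1 <- runif(n, min = 1, max = 10)          # strictly positive, so log(x1) is defined
x2 <- rbinom(n, size = 1, prob = 0.5)
y  <- 2 + 0.5 * x1 + x2 + 0.3 * x1 * x2 + rnorm(n)   # made-up outcome

# Interaction: x1 * x2 expands to x1 + x2 + x1:x2
m_int  <- lm(y ~ x1 * x2)
# Polynomial: I() makes ^ mean arithmetic squaring rather than formula syntax
m_poly <- lm(y ~ x1 + I(x1^2))
# Non-linear transformation of X
m_log  <- lm(y ~ log(x1))

names(coef(m_int))   # "(Intercept)" "x1" "x2" "x1:x2"
names(coef(m_poly))  # "(Intercept)" "x1" "I(x1^2)"
names(coef(m_log))   # "(Intercept)" "log(x1)"
```

Without `I()`, `x1^2` inside a formula would be read as a formula operator rather than a squared term.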

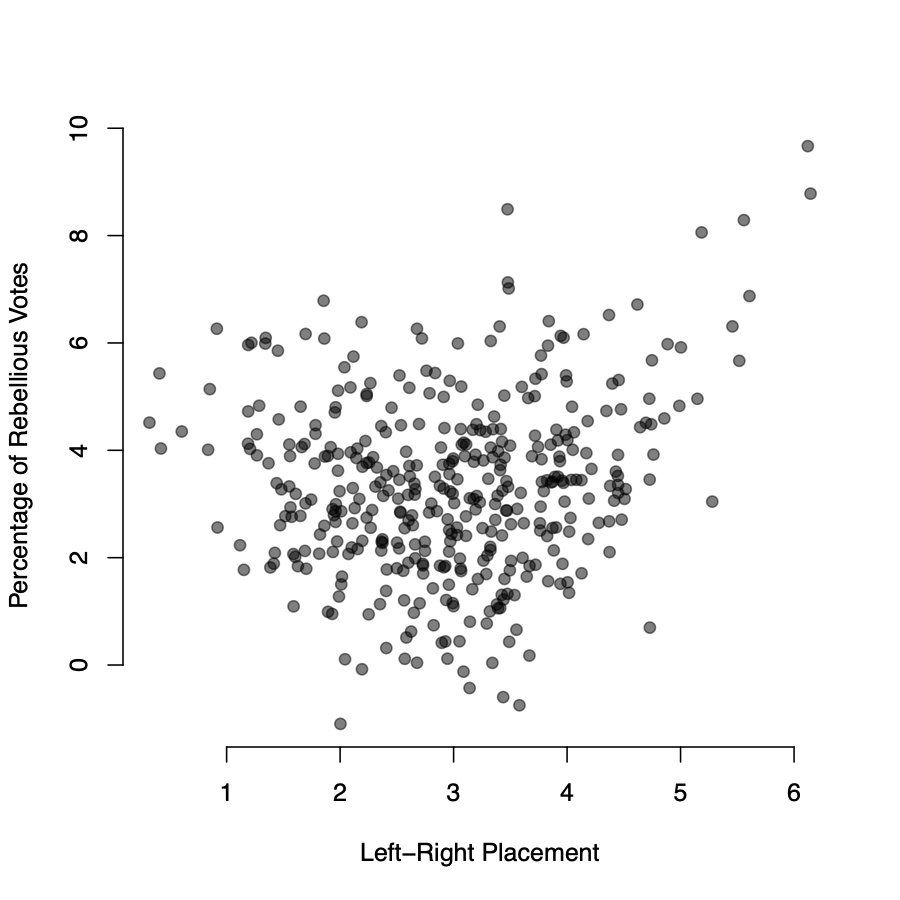

Misspecification of the functional form

Does ideology determine ‘rebelliousness’ in parliamentary votes? Theory: governing party MPs’ left-right position should predict votes against government policy. Context: votes of 400 Labour MPs in the House of Commons in 2005.

- Dependent variable (\(Y\)): percentage of votes in which the MP voted against the government line

- Independent variable (\(X_1\)): MP’s self-placement on a left-right scale (0 = most left, 10 = most right)

- Control variable (\(X_2\)): years the MP has been in parliament

\[ \text{Rebel Votes}_i = \beta_0 + \beta_1X_1 + \beta_2X_2 + u_i \]

Misspecification of the functional form

| | Percentage of rebel votes |
|---|---|
| Left-Right Placement | 0.393 |
| | (0.460) |
| Experience | 1.427\(^{***}\) |
| | (0.044) |
| Constant | 2.435\(^{***}\) |
| | (0.131) |
| Observations | 400 |
| \(R^{2}\) | 0.734 |
| Note: | \(^{*}p<0.1\); \(^{**}p<0.05\); \(^{***}p<0.01\) |

\(\rightarrow\) the linear specification suggests no relationship between ideology and rebellion.

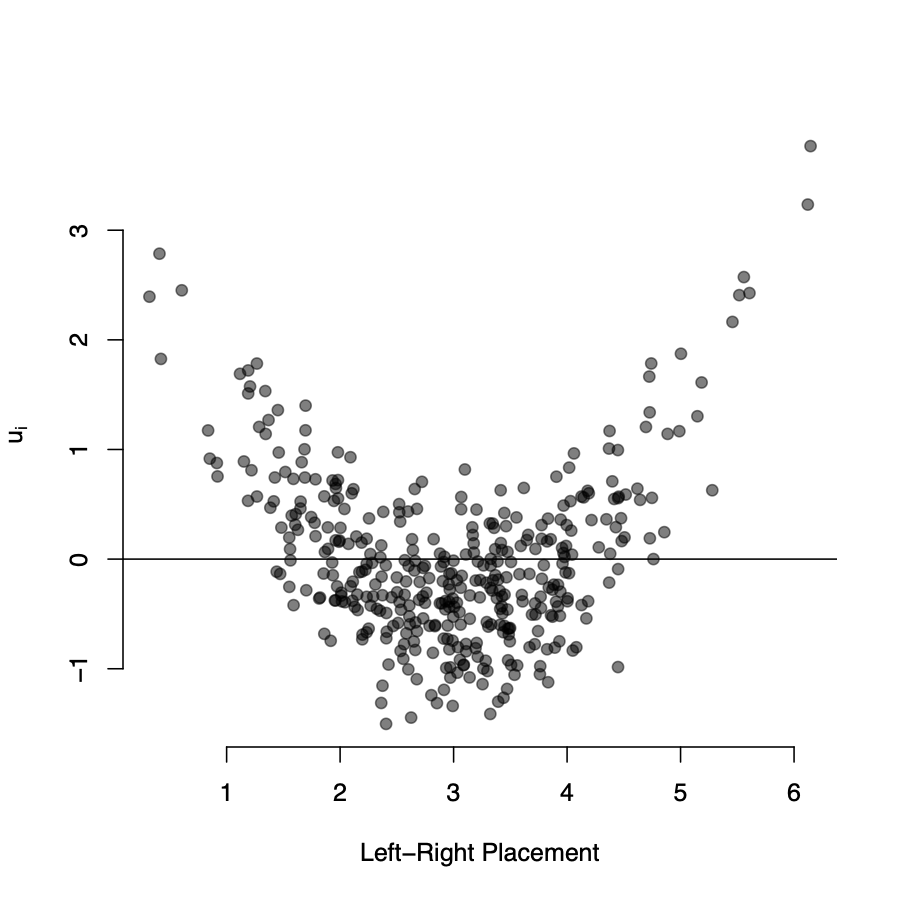

Misspecification of the functional form

Is the assumption of a linear relationship reasonable?

- Theoretically: Labour MPs are more likely to defect the further they are (in either direction) from the government

- Empirically: we can examine the sample residuals to detect non-linearity

\[ \hat{u}_i = Y_i - \hat{Y}_i \]

- Conditional mean independence assumption: \(E(u_i|X_i) = 0\)

Implication: in our sample, the residuals \(\hat{u}_i\) should be distributed around zero for each value of X

Misspecification of the functional form

- We under-predict the rebelliousness of extremists

- We over-predict the rebelliousness of centrists

- The partial relationship between left-right placement and rebellion is clearly nonlinear
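The residual check can also be done numerically by averaging residuals within ranges of X. The sketch below uses hypothetical U-shaped data loosely styled on the rebellion example (not the real data), and the bin cut-points 2 and 8 are arbitrary choices.

```r
set.seed(272)
n <- 400
# Hypothetical U-shaped data loosely styled on the rebellion example (not the real data)
ideology <- runif(n, min = 0, max = 10)
rebel    <- 6 - 3 * ideology + 0.45 * ideology^2 + rnorm(n)

m_linear <- lm(rebel ~ ideology)
m_quad   <- lm(rebel ~ ideology + I(ideology^2))

# Mean residual within ranges of X: should be close to zero everywhere
# if E(u | X) = 0 holds for the fitted specification
bins <- cut(ideology, breaks = c(0, 2, 8, 10), include.lowest = TRUE)
res_linear <- tapply(resid(m_linear), bins, mean)
res_quad   <- tapply(resid(m_quad), bins, mean)

round(rbind(linear = res_linear, quadratic = res_quad), 2)
```

The linear fit shows the same pattern as the plot: positive mean residuals at the extremes (under-prediction) and negative ones in the centre (over-prediction), while the quadratic fit leaves residuals centred on zero in every bin.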

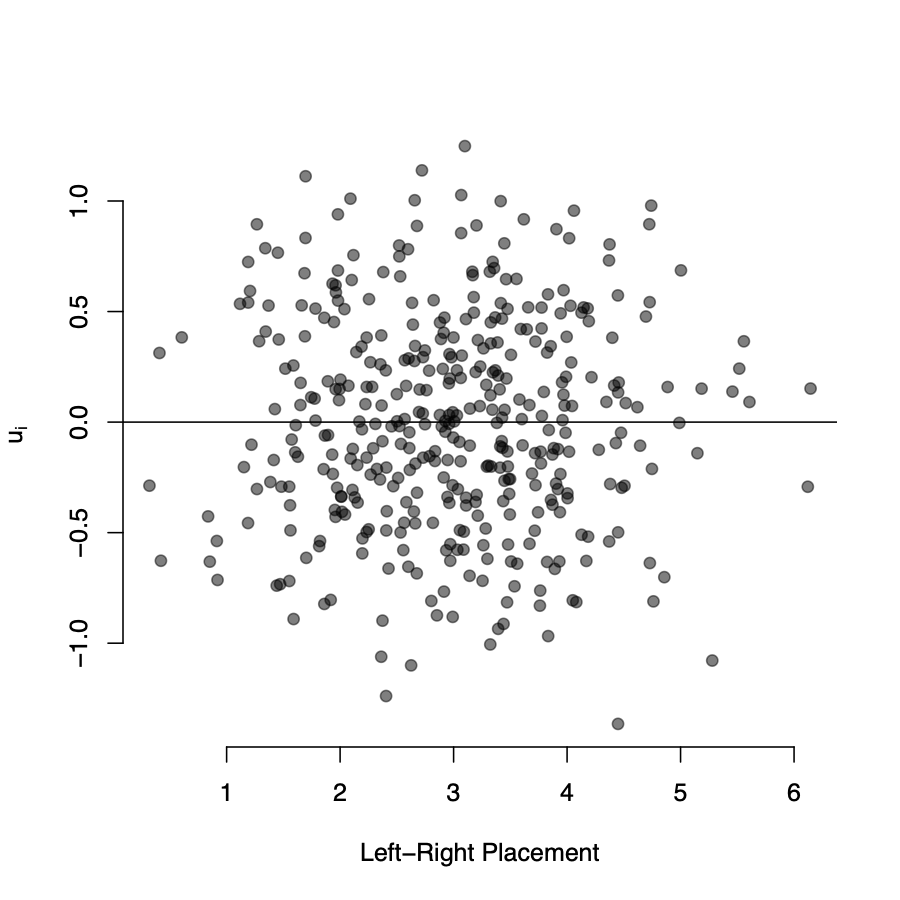

Misspecification of the functional form

\[ \text{Rebel Votes}_i = \beta_0 + \beta_1X_1 + \beta_2{X_1}^2+ \beta_3X_2 + u_i \]

Dependent variable: percentage of rebel votes

| | (1) | (2) |
|---|---|---|
| Left-Right Placement | 0.393 | -3.172\(^{***}\) |
| | (0.460) | (0.114) |
| Left-Right Placement\(^2\) | | 0.458\(^{***}\) |
| | | (0.018) |
| Experience | 1.427\(^{***}\) | 1.416\(^{***}\) |
| | (0.044) | (0.027) |
| Constant | 2.435\(^{***}\) | 6.206\(^{***}\) |
| | (0.131) | (0.170) |
| Observations | 400 | 400 |
| \(R^{2}\) | 0.734 | 0.898 |
| Note: | \(^{*}p<0.1\); \(^{**}p<0.05\); \(^{***}p<0.01\) | |

Accounting for non-linearity reveals the potential danger of misspecifying the functional form

- Model 1 suggests no partial linear relationship

- Model 2 suggests a strong partial non-linear relationship
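Model 2 also implies where predicted rebellion is lowest. Holding experience constant, set the derivative of the fitted quadratic with respect to \(X_1\) to zero and plug in the column (2) estimates (a back-of-the-envelope calculation that ignores sampling uncertainty):

\[ \frac{\partial\, \widehat{\text{Rebel Votes}}}{\partial X_1} = \hat{\beta}_1 + 2\hat{\beta}_2 X_1 = 0 \quad\Rightarrow\quad X_1^{*} = -\frac{\hat{\beta}_1}{2\hat{\beta}_2} = -\frac{-3.172}{2 \times 0.458} \approx 3.46 \]

Predicted rebellion is lowest for MPs placing themselves at about 3.5 on the scale and rises as MPs move away from that point in either direction.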

Misspecification of the functional form

Let’s plot the residuals from model 2 against the L-R variable:

- The residuals are randomly distributed around zero

- There is no detectable “pattern” in the residuals

Measurement errors

- When we have errors in the measurement of X variables, we end up with biased OLS estimators

- Errors may arise from data entry mistakes and also from systematic misrepresentation of true underlying data

- Dealing with measurement error is complicated since we don’t usually know the underlying process of error generation

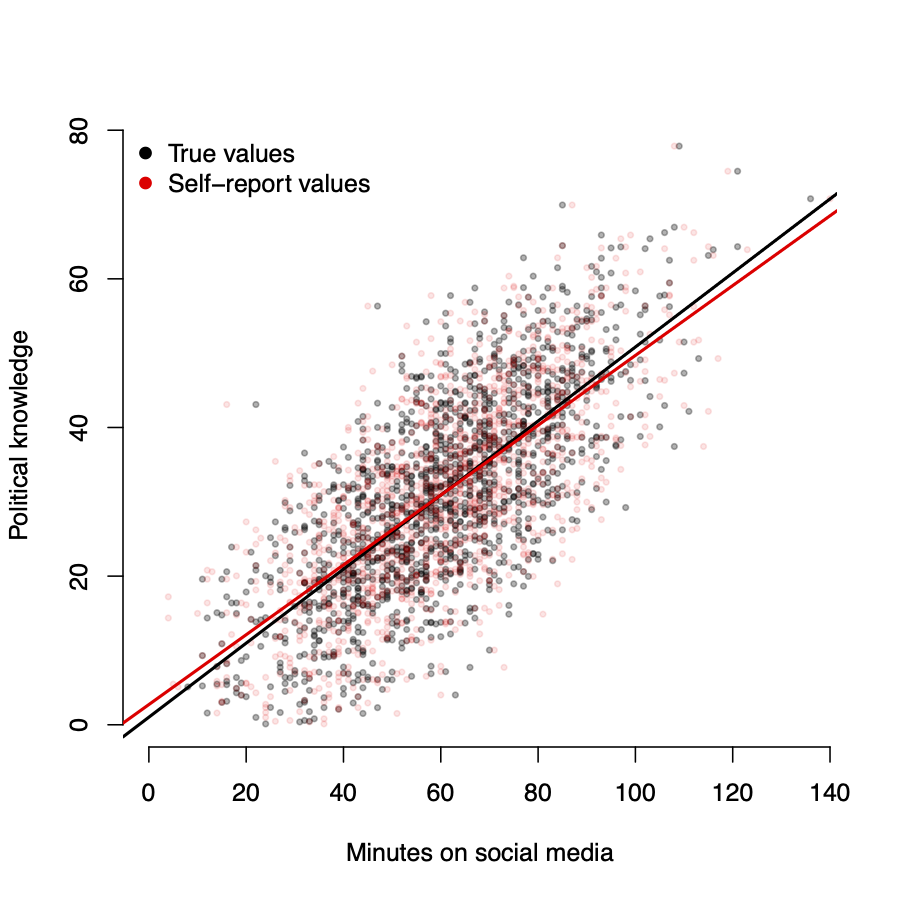

Measurement errors

What is the relationship between an individual’s use of social media and their level of political knowledge? We ask survey respondents how many minutes they spend on social media each day, and then test their political knowledge on a series of questions.

- Dependent variable (\(Y\)): political knowledge (-50 to 50)

- Independent variable (\(X_1\)): reported daily use of social media (minutes)

Measurement errors

Let’s assume (naively) that respondents make random errors when reporting the amount of time they spend on social media.

\[ \tilde{X_i} = X_i + w_i \]

- \(X_i\) is the true time respondent \(i\) spends on social media

- \(w_i\) is the random error when they report their social media use

- \(\tilde{X_i}\) is the variable we observe

If \(w_i\) is truly random, surely this won’t be a problem, right?

Wrong.

- Even when the measurement error in \(X\) is random, the OLS coefficient will be biased towards zero
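A minimal simulation of this attenuation, assuming invented values (true usage with mean 60 and SD 20 minutes, a true slope of 0.2). It treats the 5, 15 and 25 on the following slides as standard deviations of \(w_i\); the slides do not say whether they are variances or standard deviations, so that is an assumption. The classical errors-in-variables result predicts \(\hat{\beta} \rightarrow \beta \, Var(X) / (Var(X) + Var(w))\).

```r
set.seed(272)
n <- 5000
x_true <- rnorm(n, mean = 60, sd = 20)       # hypothetical true daily minutes
y <- 10 + 0.2 * x_true + rnorm(n, sd = 5)    # made-up true slope of 0.2

# Re-estimate the slope using reports with purely random error w ~ N(0, s^2)
noise_sd <- c(0, 5, 15, 25)
slopes <- sapply(noise_sd, function(s) {
  x_reported <- x_true + rnorm(n, mean = 0, sd = s)
  coef(lm(y ~ x_reported))[["x_reported"]]
})

# Errors-in-variables result: plim(beta_hat) = beta * var(X) / (var(X) + var(w))
expected <- 0.2 * 20^2 / (20^2 + noise_sd^2)
round(rbind(estimated = slopes, expected = expected), 3)
```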

Measurement errors

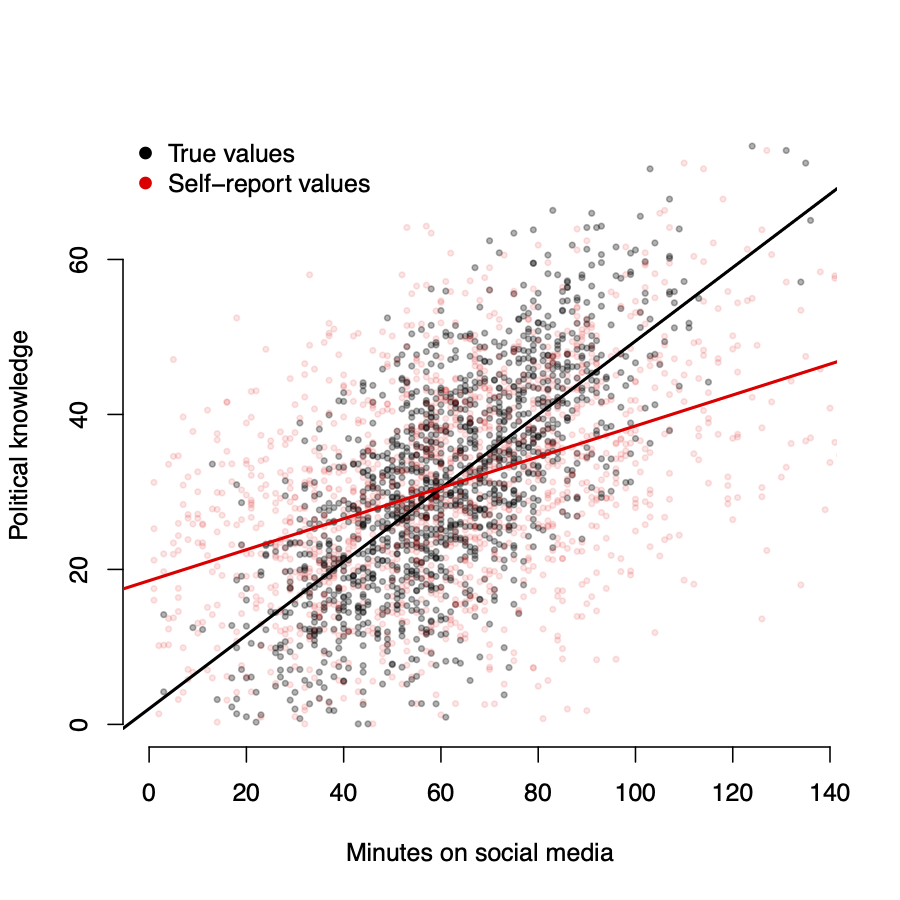

- Black \(\rightarrow\) true values of \(X\)

- Red \(\rightarrow\) reported values of \(\tilde{X}\)

- \(w_i \sim N(0, 5)\)

As measurement error increases, \(\hat{\beta}\) \(\rightarrow\) 0

Measurement errors

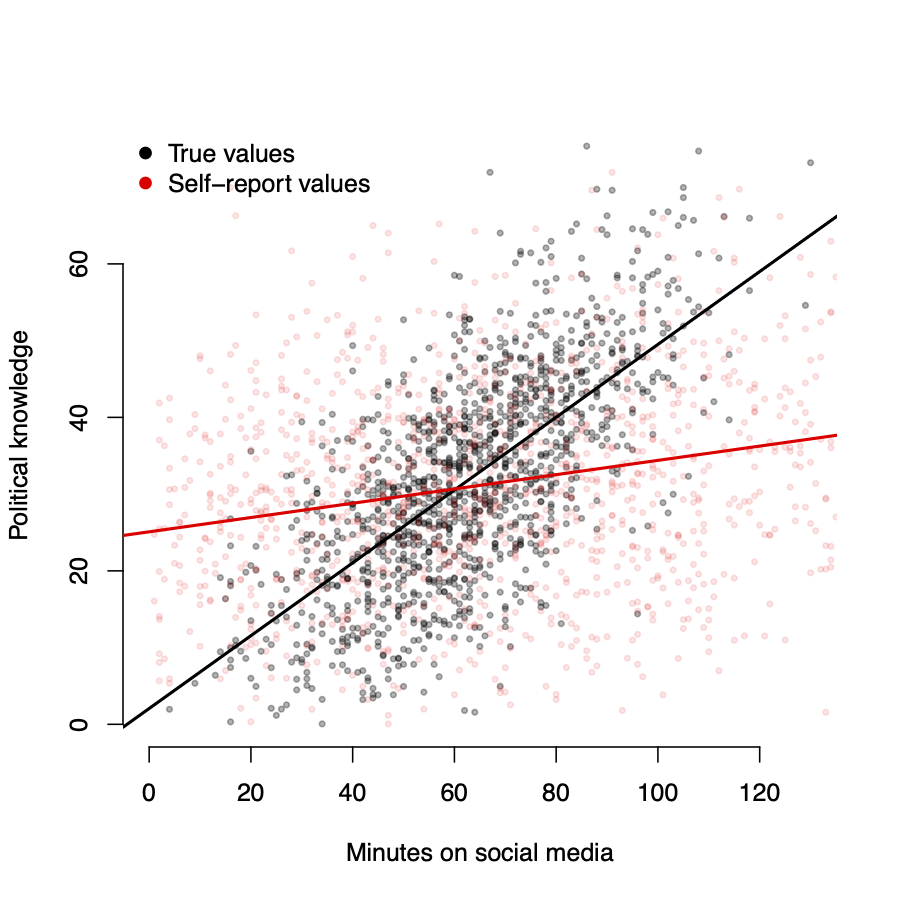

- Black \(\rightarrow\) true values of \(X\)

- Red \(\rightarrow\) reported values of \(\tilde{X}\)

- \(w_i \sim N(0, 15)\)

As measurement error increases, \(\hat{\beta}\) \(\rightarrow\) 0

Measurement errors

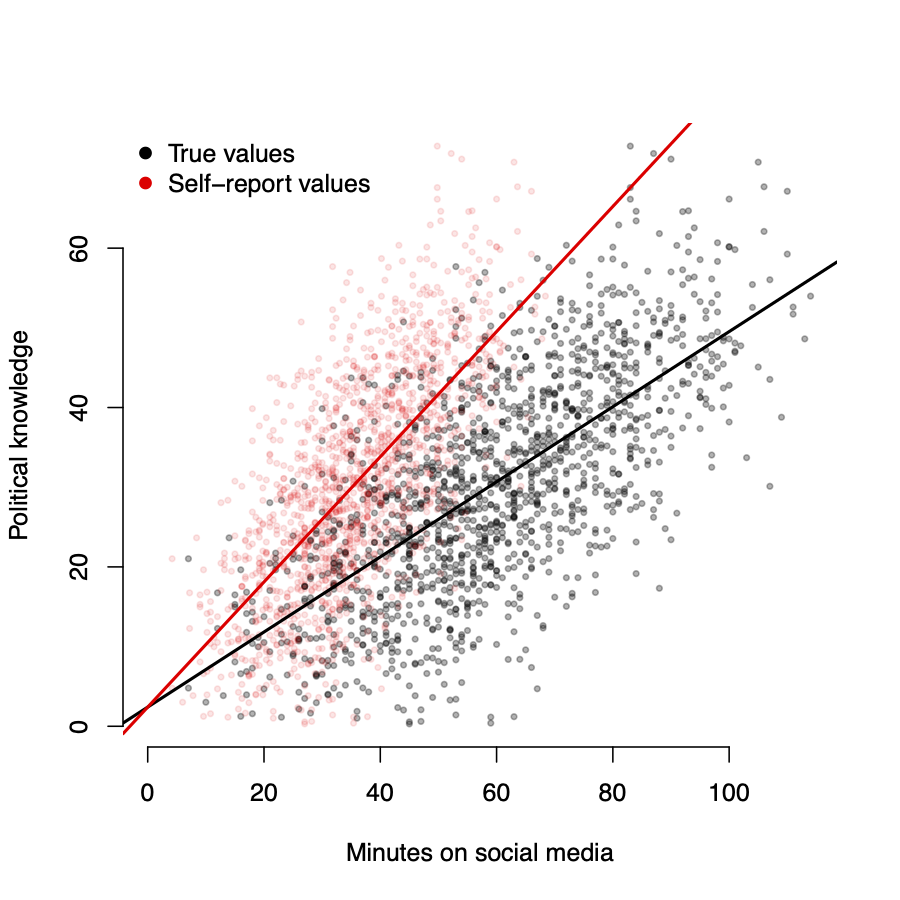

- Black \(\rightarrow\) true values of \(X\)

- Red \(\rightarrow\) reported values of \(\tilde{X}\)

- \(w_i \sim N(0, 25)\)

As measurement error increases, \(\hat{\beta}\) \(\rightarrow\) 0

Measurement errors

More likely, respondents make systematic errors in reporting the amount of time they spend on social media

- Imagine that all respondents report only 60% of the time they spend on social media:

\[ \tilde{X}_i = 0.60 \times X_i \]

- Since \(X_i = \tilde{X}_i / 0.60\), \(\hat{\beta}\) will be inflated by a factor of \(1/0.60 \approx 1.67\), i.e. its magnitude is biased upwards by about 67%

If the error is non-random, \(\hat{\beta}\) will also be biased
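The 60% example can be verified directly: rescaling X by a constant rescales the OLS slope by the inverse of that constant. The data below are simulated with invented values; only the ratio of the two slopes matters.

```r
set.seed(272)
n <- 1000
x_true <- rnorm(n, mean = 60, sd = 20)       # hypothetical true daily minutes
y <- 10 + 0.2 * x_true + rnorm(n, sd = 5)

x_reported <- 0.60 * x_true                  # everyone reports only 60% of their use

b_true     <- coef(lm(y ~ x_true))[["x_true"]]
b_reported <- coef(lm(y ~ x_reported))[["x_reported"]]

b_reported / b_true                          # equals 1 / 0.60, about 1.67
```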

Measurement errors

Solutions to measurement error in X, in order of frequency of use:

- Pretend there is no measurement error\(\ldots\)

- Get a better X measure

- Instrumental variables

- Second model for measurement error

Missing data and sample selection

Missing data are a common feature in any data analysis task.

- Data missing completely at random (unrelated to X or Y)

  - \(\rightarrow\) reduced sample size (larger standard errors) but no bias.

- Data missing based on X

  - \(\rightarrow\) reduced sample size (larger standard errors), lower external validity, but no bias.

- Data missing based on Y (not related to X)

  - \(\rightarrow\) can introduce correlation between the error term and the regressors. The resulting bias in the OLS estimator is called sample selection bias.

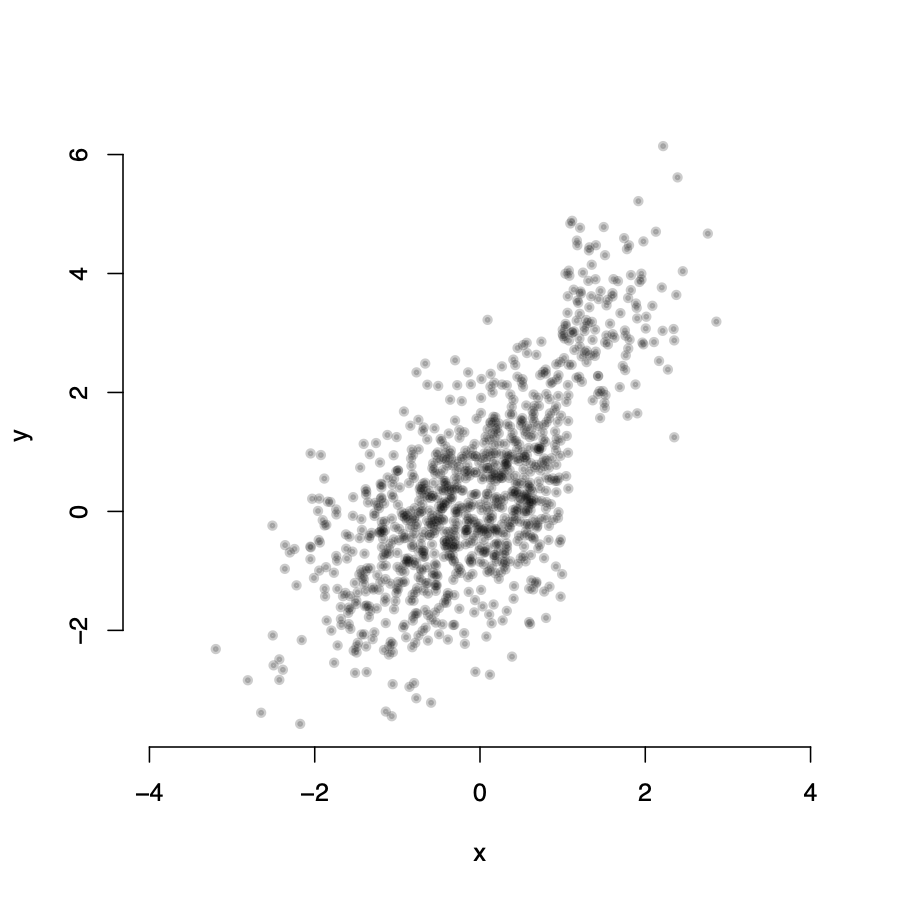

Missing data and sample selection

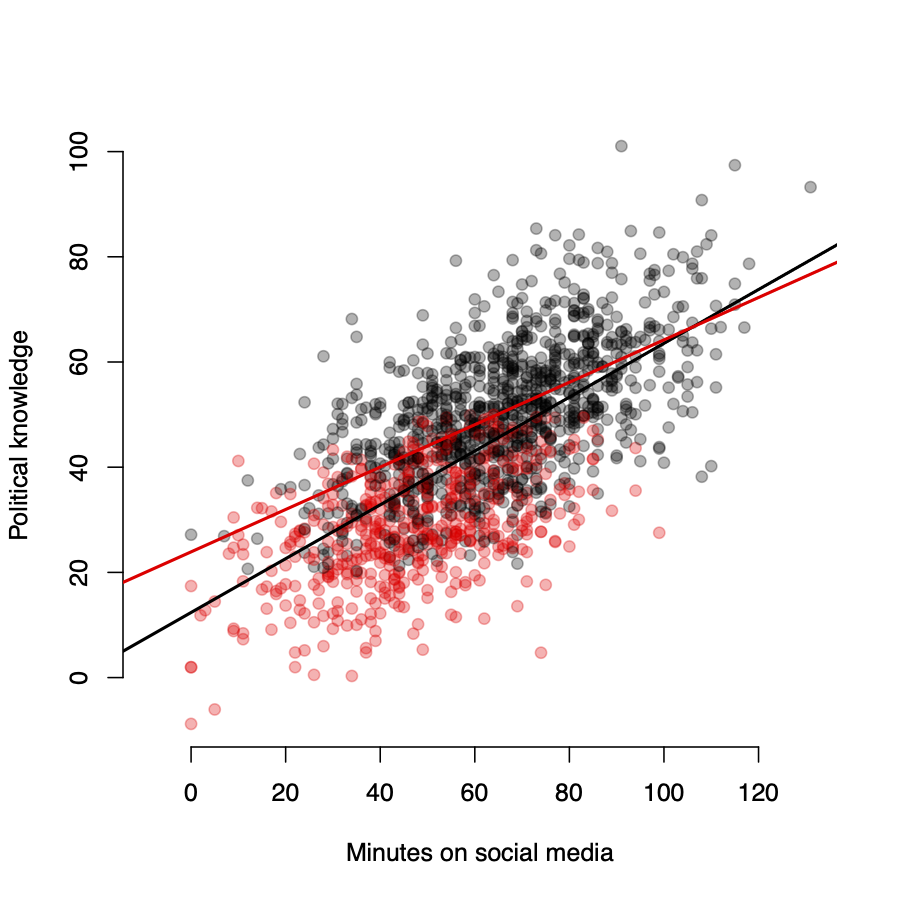

Let’s assume that people with low levels of political knowledge are on average less likely to respond to political surveys.

- Black \(\rightarrow\) observations in sample

- Red \(\rightarrow\) observations excluded from sample

- \(\hat{\beta}\) is biased downwards

If sample selection is based on Y, \(\hat{\beta}\) will also be biased
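A sketch contrasting the two kinds of selection, on invented data with a true slope of 0.2. As a simplification, selection on Y is modelled as a hard cut-off (respondents scoring below 0 never respond) rather than a lower response probability; selection on X drops heavy social media users.

```r
set.seed(272)
n <- 20000
social_media <- runif(n, min = 0, max = 120)                 # hypothetical minutes per day
knowledge <- -4 + 0.2 * social_media + rnorm(n, sd = 10)     # made-up true slope of 0.2

keep_x <- social_media < 80     # selection on X: heavy users are missing
keep_y <- knowledge > 0         # selection on Y: low-knowledge respondents are missing

b_full  <- coef(lm(knowledge ~ social_media))[["social_media"]]
b_sel_x <- coef(lm(knowledge ~ social_media, subset = keep_x))[["social_media"]]
b_sel_y <- coef(lm(knowledge ~ social_media, subset = keep_y))[["social_media"]]

round(c(full = b_full, selected_on_x = b_sel_x, selected_on_y = b_sel_y), 3)
```

Dropping observations based on X leaves the slope close to 0.2, while dropping them based on Y pulls it downwards.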

Dealing with missing data and sample selection

- If data are missing completely at random, we can use multiple imputation methods to deal with the issue

- If data are missing due to some selection on X, there is a family of methods for dealing with such truncated and censored data

- We can use selection models to deal with sample selection bias

All of these approaches are beyond the scope of our course

Internal validity: SE(\(\hat{\beta}\))

Sources of inconsistency of OLS standard errors

Inconsistent standard errors are problematic. Even if \(\hat{\beta}\) is consistent and the sample size is large:

- Standard errors may be too small (or too big); when they are too small:

  - \(\rightarrow\) hypothesis tests may reject the null hypothesis too often

  - \(\rightarrow\) confidence intervals may be too narrow (i.e. not actually at the desired confidence level)

Inconsistent standard errors are usually due to:

- Heteroskedasticity

- Correlation of the error term across observations

Homoskedasticity vs Heteroskedasticity

Another assumption: The variance of the error term (\(\sigma^2\)) is the same for all units i, i.e. it does not depend on \(X_i\)

Homoskedasticity

\(Var(u_i | X_i)=Var(u)=\) constant \(\rightarrow\) does not depend on X

Income and expenditure on meals

We would like to know whether richer people spend more money on food than poorer people. We collect data on 1000 meals purchased by individuals with different income levels.

- Dependent variable (\(Y\)): cost of meal (£)

- Independent variable (\(X_1\)): individual income (£ per year)

Homoskedasticity vs Heteroskedasticity

Homoskedasticity: \(Var(u_i | X_i=x)=\) const.

Heteroskedasticity: \(Var(u_i | X_i=x)\neq\) const.

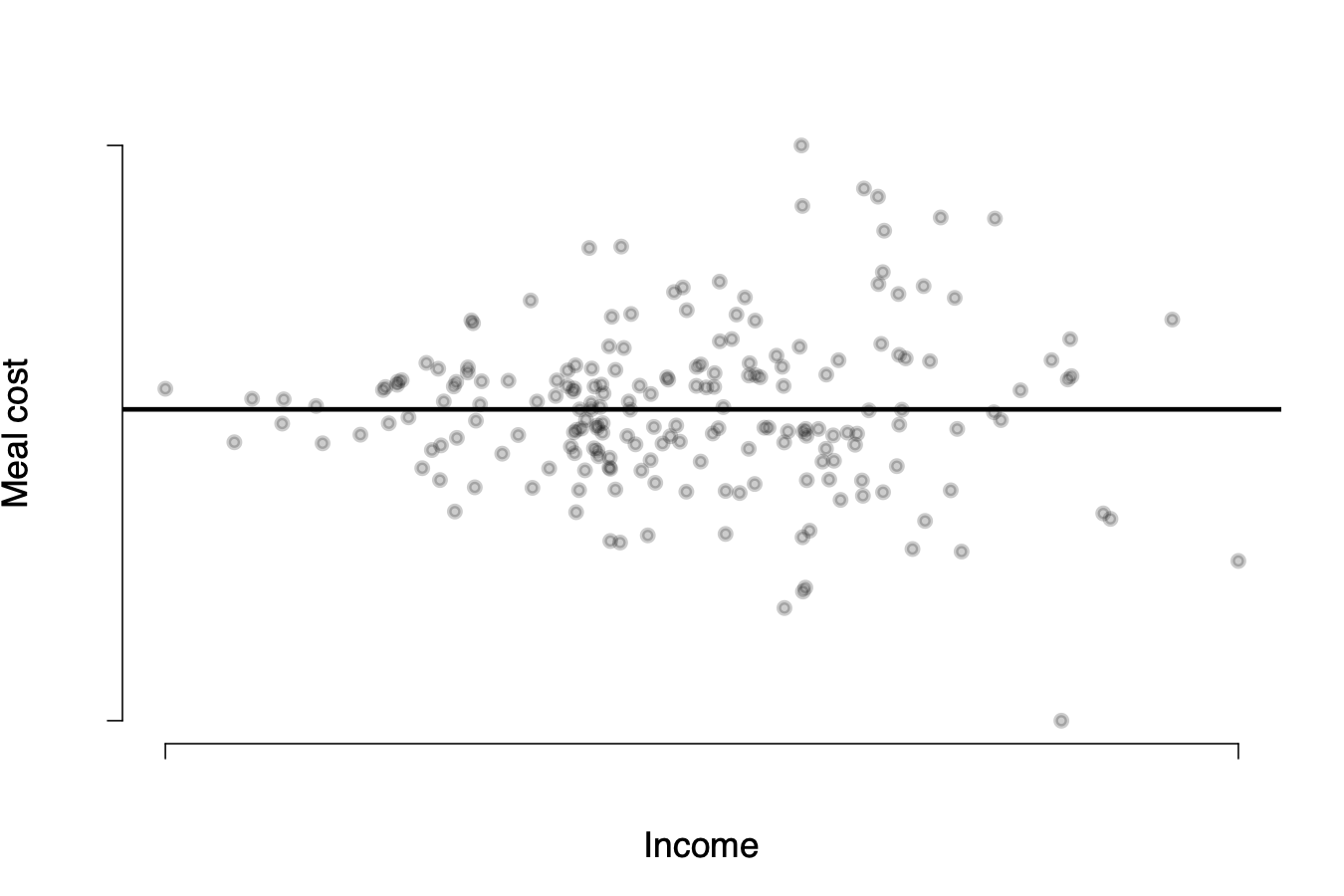

Detecting heteroskedasticity visually

We can detect heteroskedasticity visually by again plotting the residuals against our X variables:

\(\rightarrow\) when the distribution of \(u_i\) follows this “funnel” shape, that suggests the errors are heteroskedastic

Implications of heteroskedasticity

The good news:

Whether the errors are homoskedastic or heteroskedastic:

- \(\hat{\beta}\) is unbiased

- \(\hat{\beta}\) is consistent

The bad news:

If the homoskedasticity assumption is violated:

- \(t\)-statistics do not have a standard normal distribution

- Conventional standard errors may be too small

- Hypothesis tests may reject the null hypothesis too often

- Confidence intervals may be too narrow

Heteroskedasticity can lead to standard errors that are too small or too large. But we generally care less about overestimating the standard error.

Dealing with heteroskedasticity

Test for heteroskedasticity using the Breusch-Pagan test.

Intuition:

- Fit a linear model: \(Y_i = \beta_0 + \beta_1X_1 + \beta_2X_2 + u_i\)

- Fit a linear model for the squared residuals: \(\hat{u}_i^2 = \beta_{u0} + \beta_{u1}X_1 + \beta_{u2}X_2 + \gamma_i\)

- The null hypothesis \(H_0\) is homoskedasticity

- If the X variables explain “too much” of the variation in the squared residuals, reject the null hypothesis of homoskedasticity

- If the p-value is below an appropriate threshold (e.g. \(p<0.05\)), the null hypothesis of homoskedasticity is rejected
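A minimal sketch of these steps in base R, on simulated meal-cost data with a deliberately funnel-shaped error (the numbers are invented, not the course dataset). It uses the \(n \times R^2\) form of the statistic from the auxiliary regression; the `bptest()` function in the lmtest package implements this test, and its default `studentize = TRUE` uses this form.

```r
set.seed(272)
n <- 1000
income <- runif(n, min = 10000, max = 100000)            # hypothetical £ per year
# Funnel-shaped errors: the spread of meal cost grows with income
meal_cost <- 5 + 0.0001 * income + rnorm(n, sd = 0.00005 * income)

# Step 1: fit the linear model and keep the squared residuals
model_bp <- lm(meal_cost ~ income)
u2 <- resid(model_bp)^2

# Step 2: regress the squared residuals on the X variable(s)
aux <- lm(u2 ~ income)

# Step 3: LM statistic n * R^2, compared with a chi-squared distribution
# whose degrees of freedom equal the number of X variables (here 1)
bp_stat <- n * summary(aux)$r.squared
p_value <- pchisq(bp_stat, df = 1, lower.tail = FALSE)

c(statistic = bp_stat, p_value = p_value)
```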

If heteroskedasticity is present, calculate heteroskedasticity-robust standard errors

Testing for heteroskedasticity

\(\rightarrow\) there is evidence of heteroskedasticity in the meal cost data

Implementing heteroskedasticity correction in R

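```r
# model1 is the lm() fit of meal cost on income estimated earlier
library(sandwich)  # vcovHC(): heteroskedasticity-robust covariance matrices
library(lmtest)    # coeftest(): coefficient tests using a supplied covariance matrix
library(texreg)    # screenreg(): side-by-side regression tables

# Use coeftest() with vcovHC() to tell R to calculate
# heteroskedasticity-robust SEs ("HC3")
model2 <- coeftest(model1, vcov. = vcovHC(model1, type = "HC3"))

screenreg(list(model1, model2))
```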

```
==================================
             Model 1    Model 2
----------------------------------
(Intercept)    0.89       0.89
              (1.61)     (1.61)
income         0.14 ***   0.14
              (0.04)     (0.13)
----------------------------------
R^2            0.25
Adj. R^2       0.24
Num. obs.    200
RMSE          17.22
==================================
*** p < 0.001, ** p < 0.01, * p < 0.05
```

\(\rightarrow\) when adjusting for heteroskedasticity, \(\hat{\beta}_\text{income}\) is no longer significant
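To see what the HC3 correction does under the hood, here is a base-R sketch of the sandwich formula \((X'X)^{-1} X' \hat{\Omega} X (X'X)^{-1}\) with \(\hat{\Omega} = \text{diag}\big(\hat{u}_i^2 / (1 - h_i)^2\big)\), where \(h_i\) is each observation's leverage. The data are simulated (income in hypothetical £10,000s, with the spread of meal cost growing with income); `sandwich::vcovHC(fit, type = "HC3")` should reproduce `v_hc3`.

```r
set.seed(272)
n <- 2000
income <- runif(n, min = 1, max = 10)                  # hypothetical income, £10,000s per year
meal_cost <- 2 + 0.5 * income + rnorm(n, sd = income^2 / 10)  # spread grows with income

fit <- lm(meal_cost ~ income)
X <- model.matrix(fit)                 # n x 2 design matrix (intercept, income)
e <- resid(fit)
h <- hatvalues(fit)                    # leverage h_i of each observation
bread <- solve(crossprod(X))           # (X'X)^{-1}

# Classical covariance, valid only under homoskedasticity: s^2 (X'X)^{-1}
v_classic <- sum(e^2) / (n - ncol(X)) * bread
# HC3 sandwich: (X'X)^{-1} X' diag(e_i^2 / (1 - h_i)^2) X (X'X)^{-1}
meat  <- crossprod(X * (e / (1 - h)))
v_hc3 <- bread %*% meat %*% bread

se <- cbind(classic = sqrt(diag(v_classic)), hc3 = sqrt(diag(v_hc3)))
round(se, 3)
```

The point estimates are unchanged; only the standard errors differ. In this design the robust SE on income is larger than the classical one, which is the pattern behind the loss of significance in the table above.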

External validity

Threats to external validity

Potential threats to external validity arise from differences between the population and setting studied and the population and setting of interest.

- Differences in populations (e.g. testing new medication on animals while we are interested in its effect on humans).

- Differences in settings (e.g. the effects of a job training programme might be different in the US from those in France)
